AI in Cybersecurity: Where It Helps, Where It Hurts, and How to Use It Safely

AI has moved from fringe experiment to frontline tool in cybersecurity. Security teams are drowning in alerts, log data and ever more sophisticated attacks; human‑only operations simply cannot keep up. Industry research backs this up: one global survey found that 87% of organisations encountered AI‑driven cyber attacks in 2024, but only a quarter felt highly confident they could reliably detect them.

At the same time, boards, regulators and customers are demanding faster detection, clearer accountability and proof that AI will not introduce fresh weaknesses. The question is no longer whether to use AI in security, but how to use it without making things worse.

In this article, “AI in cybersecurity” means systems that apply machine learning and generative AI to help detect, investigate and respond to threats. These range from models that sift network and identity telemetry for anomalies, to assistants that summarise incidents or propose response actions.

Used well, they can cut dwell time, expose subtle fraud and free analysts from repetitive work. Microsoft’s early deployments of security‑focused AI agents, for example, show how autonomous triage can absorb high‑volume phishing and data‑loss alerts rather than simply generating more noise.

Used badly, they can supercharge attackers, leak data or trigger unsafe automation. As one AI security researcher put it, “you can patch a bug, but you cannot patch a brain” – treating probabilistic models like deterministic software is a fast route to unexpected failure modes.

This piece is written for security and technology leaders, IT decision‑makers and hands‑on practitioners. It is not a hacking tutorial or a pitch for any particular vendor. Instead, it will:

- Highlight where AI is already strengthening defence – from behavioural analytics in banks to AI‑on‑AI “watchdog” models that sit in front of large language models

- Examine how attackers are weaponising the same capabilities, from deepfake‑enabled payment fraud to agentic systems that run end‑to‑end extortion campaigns

- Unpack the new risks and failure modes AI introduces, including model‑supply‑chain attacks and shadow AI inside your own estate

- Offer practical guardrails and decision frameworks for safe, real‑world adoption, aligned with emerging guidance from bodies such as the FCA and NCSC

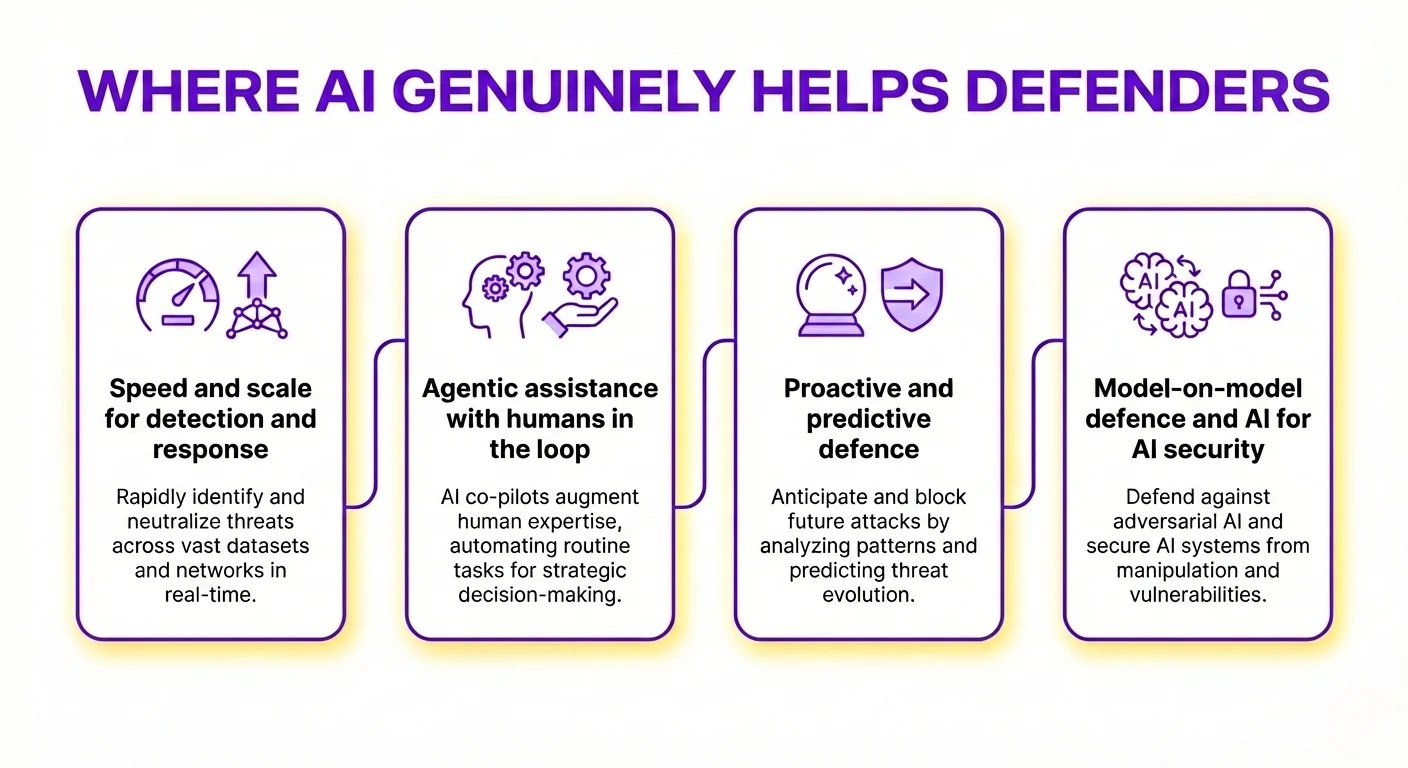

WHERE AI GENUINELY HELPS DEFENDERS

AI is already changing day‑to‑day cyber defence, but its value comes from tightly scoped, well‑supervised use rather than magical autonomy. Used well, it amplifies existing controls, shrinks attacker dwell time and frees experts to focus on judgement, not clicks. As the FCA’s cyber coordination groups have noted, firms are increasingly embedding AI into core cyber processes precisely because manual teams cannot keep up with today’s data volumes and attack speeds.

Speed and scale for detection and response

Modern environments generate more telemetry than any human team can read. AI excels at sifting this noise for weak signals:

- spotting anomalies in network, endpoint, identity and cloud logs, such as impossible travel logins, unusual device pairings, or data egress at odd hours

- correlating small hints – a new admin token, a rare process start, a DNS anomaly – into a single high‑risk story

This directly improves time to detect and time to respond. Instead of analysts trawling dashboards, AI surfaces the dozen incidents that genuinely merit attention and proposes likely root causes. IBM has reported investigation efficiency gains of more than 50% when AI is used to summarise incidents and highlight likely attack paths, which aligns with what many Security Operations Centres now see in practice.
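To make one of the anomaly checks above concrete, here is a minimal sketch of an "impossible travel" test between two logins. The `Login` type, the 900 km/h speed ceiling and the 50 km tolerance are illustrative assumptions, not values from any particular product; production systems would also need to account for VPNs and geolocation error.

```python
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_SPEED_KMH = 900.0  # assumption: roughly airliner cruising speed
SIMULTANEOUS_TOLERANCE_KM = 50.0  # assumption: allowance for geolocation error


@dataclass(frozen=True)
class Login:
    """Hypothetical login event with a geolocated source."""
    user: str
    when: datetime
    lat: float
    lon: float


def distance_km(a: Login, b: Login) -> float:
    """Great-circle distance between two logins (haversine formula)."""
    lat1, lon1, lat2, lon2 = map(radians, (a.lat, a.lon, b.lat, b.lon))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def is_impossible_travel(prev: Login, curr: Login) -> bool:
    """True if covering the distance in the elapsed time needs an implausible speed."""
    hours = abs((curr.when - prev.when).total_seconds()) / 3600
    km = distance_km(prev, curr)
    if hours == 0:
        return km > SIMULTANEOUS_TOLERANCE_KM
    return km / hours > MAX_PLAUSIBLE_SPEED_KMH
```

A check like this is a deterministic rule rather than a learned model. In practice it would be one signal among many that a detection model correlates.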

Crucially, this augments the Security Operations Centre rather than displacing it. AI can:

- triage large volumes of alerts, closing clearly benign ones and clustering similar events

- stitch together activity from multiple tools into a concise incident narrative and timeline

Analysts then apply context and risk appetite: deciding whether that “odd” login is a travelling executive or an account takeover, and what to do next. In banking and insurance, for example, AI‑driven anomaly detection is already being used to reduce fraud response times from hours to milliseconds while keeping humans in charge of final, high‑impact decisions.
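The triage step of clustering similar events can be sketched very simply. The example below groups alerts that share a rule and host, then ranks the clusters so analysts see the worst first. The alert fields (`rule`, `host`, `severity`) and the severity scale are assumptions for illustration; a real SOC tool would use richer similarity measures.

```python
from collections import defaultdict

# Assumption: a four-level severity scale.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def cluster_alerts(alerts):
    """Group alerts sharing a rule and host, then rank clusters by worst severity."""
    clusters = defaultdict(list)
    for alert in alerts:
        clusters[(alert["rule"], alert["host"])].append(alert)

    summaries = []
    for (rule, host), members in clusters.items():
        worst = max(members, key=lambda a: SEVERITY_RANK[a["severity"]])
        summaries.append(
            {"rule": rule, "host": host, "count": len(members), "severity": worst["severity"]}
        )
    # Highest severity first; larger clusters break ties.
    summaries.sort(key=lambda s: (SEVERITY_RANK[s["severity"]], s["count"]), reverse=True)
    return summaries
```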

Agentic assistance with humans in the loop

Language‑capable models make strong “security co‑pilots”. They turn sprawling technical data into something humans can act on quickly:

- drafting clear incident reports for different audiences

- suggesting next investigative steps or containment options

- explaining complex detection rules or queries in plain language

- enriching alerts with likely threat actors, techniques and potential lateral movement paths

Where AI proposes actions, humans should still authorise anything with material impact. For example, an agent might recommend:

- isolating a host

- resetting credentials

- blocking a suspicious IP range

- revoking session tokens

But a person signs off on any step that moves money, changes user access, or touches production systems. This keeps decision‑making accountable, while still benefiting from machine speed and pattern‑matching. Treating agentic systems as fallible colleagues rather than infallible automation is now a core design principle in mature security teams.

Proactive and predictive defence

Beyond firefighting, AI enables more proactive security.

In fraud and identity protection, behavioural models learn what “normal” looks like for customers and staff. They can flag:

- unusual payment patterns or merchant types

- sudden device or location changes

- atypical access to high‑value systems or data

Instead of simply declining activity, organisations can introduce stepped controls: temporary holds, step‑up authentication, or real‑time challenges that frustrate criminals without unduly burdening genuine users. Regulators such as the FCA have highlighted behavioural biometrics and advanced analytics as increasingly important in reducing authorised push payment fraud and mule activity without breaking the customer experience.
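Stepped controls amount to mapping a risk score onto a graduated response rather than a binary allow/decline. The sketch below assumes a behavioural model that emits a score between 0 and 1; the thresholds are placeholders that any real deployment would tune against its own fraud and friction data.

```python
from enum import Enum


class Control(Enum):
    ALLOW = "allow"
    STEP_UP_AUTH = "step_up_authentication"
    TEMPORARY_HOLD = "temporary_hold"
    DECLINE = "decline"


def choose_control(risk_score: float) -> Control:
    """Map a model risk score in [0, 1] to a graduated response.

    Thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score < 0.30:
        return Control.ALLOW
    if risk_score < 0.60:
        return Control.STEP_UP_AUTH
    if risk_score < 0.85:
        return Control.TEMPORARY_HOLD
    return Control.DECLINE
```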

For threat hunting and vulnerability discovery, AI is particularly strong at broad, code‑driven tasks:

- scanning applications, infrastructure‑as‑code and configurations for known weaknesses and misconfigurations

- generating hypotheses for where an attacker might pivot next

- automating repetitive recon that would otherwise consume expensive specialist time

Early research from Stanford on AI agents such as ARTEMIS suggests that well‑designed systems can match or exceed human penetration testers on large estates, finding valid vulnerabilities at lower cost and higher speed. Human hunters still lead on nuanced risk calls, chaining subtle findings together and understanding business impact. AI widens the searchlight and handles the grind.

Model‑on‑model defence and AI for AI security

As organisations adopt large language models and AI‑enabled tools, they also need defences designed for these systems. A growing pattern is “model‑on‑model” protection:

- small, specialised “guard” models sit in front of larger models or powerful APIs

- they screen prompts and outputs for sensitive data, jailbreak attempts, data exfiltration patterns or unsafe tool calls

This adds an adaptive layer of control, but it only works when the basics are in place. AI performs best when combined with:

- strong authentication and robust identity management

- least‑privilege access and tight token scoping

- comprehensive logging and audit trails

Industry practice is moving the same way: security vendors and platforms are increasingly using AI‑on‑AI monitoring to detect prompt injection, large‑scale probing and data exfiltration against corporate models, while regulators stress that models themselves must be treated as high‑value assets. In effect, AI is a force multiplier for a solid security architecture, not a substitute for it. Where fundamentals are weak, automation simply helps defences fail faster and louder.
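The shape of a guard layer can be illustrated with a rule-based stand-in. A real guard would typically be a small classifier model; the regular expressions here are deliberately simple assumptions that show the interface (text in, verdict and reasons out), and they would be easy to evade on their own.

```python
import re
from dataclasses import dataclass, field

# Illustrative patterns only; a production guard model would be far broader.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
}
INJECTION_PATTERNS = {
    "override_instructions": re.compile(
        r"ignore (?:all )?(?:previous|prior) instructions", re.IGNORECASE
    ),
    "reveal_system_prompt": re.compile(r"reveal (?:your|the) system prompt", re.IGNORECASE),
}


@dataclass
class Verdict:
    allowed: bool
    reasons: list = field(default_factory=list)


def screen(text: str) -> Verdict:
    """Screen a prompt or model output before it crosses the trust boundary."""
    checks = {**SECRET_PATTERNS, **INJECTION_PATTERNS}
    reasons = [name for name, pattern in checks.items() if pattern.search(text)]
    return Verdict(allowed=not reasons, reasons=reasons)
```

The same `screen` function can sit on both sides of the larger model: on inbound prompts to catch manipulation, and on outbound text to catch leaked secrets.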

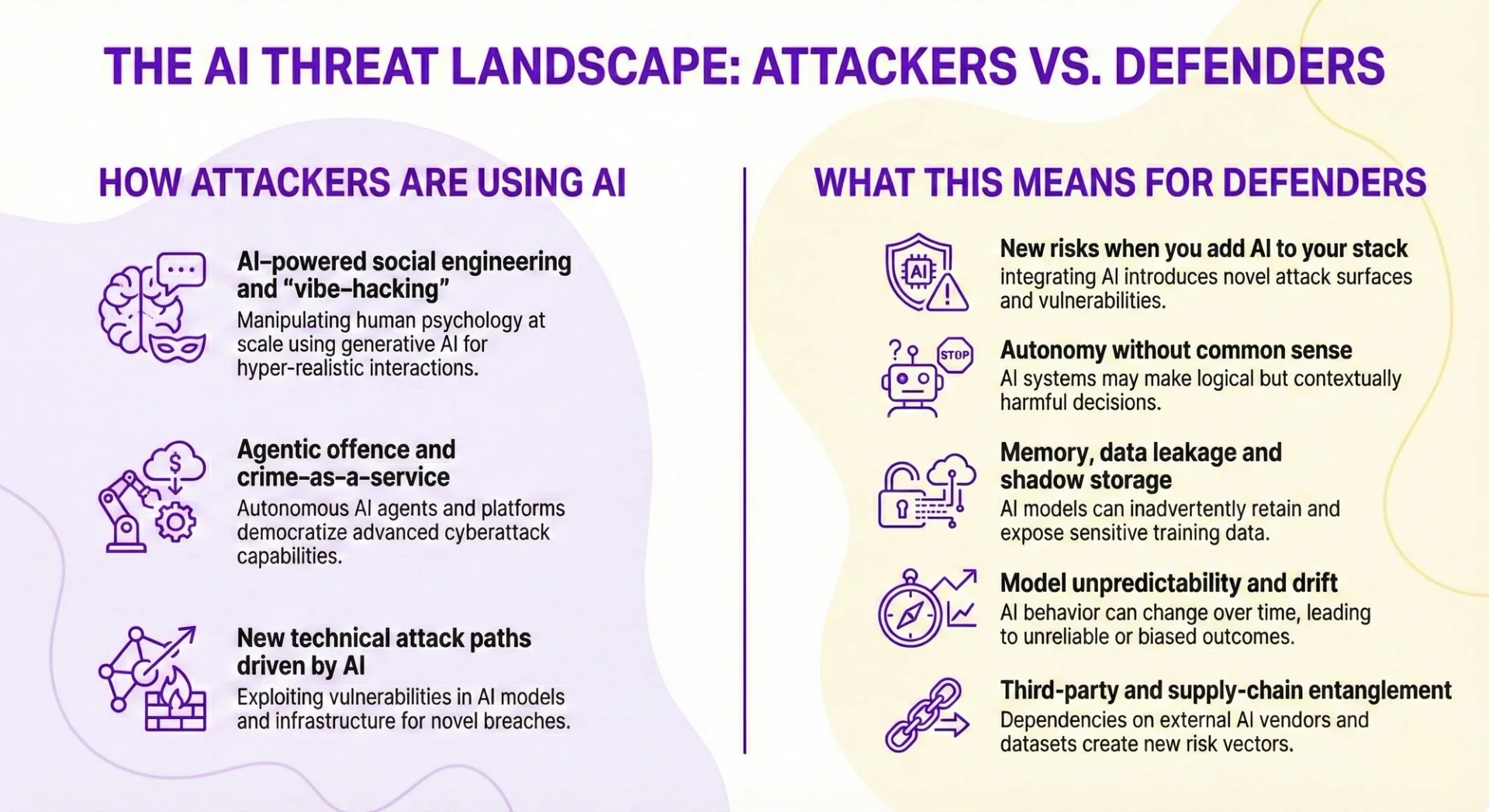

HOW ATTACKERS ARE USING AI (AND WHY IT MATTERS)

AI has given criminals the same power-ups defenders are chasing: speed, scale and convincing narratives. The difference is that attackers do not have to worry about regulation, ethics or brand risk. Understanding how they are using AI is crucial to setting realistic expectations for defence.

AI‑powered social engineering and “vibe‑hacking”

Attackers now use generative AI to *feel* like the people you trust. They mine social media, CVs and company bios, then generate messages that match a target’s language, culture and current concerns. Anthropic’s recent threat‑intelligence work describes this as AI acting as both “technical consultant and active operator” for campaigns that blend reconnaissance, extortion and finely‑tuned social engineering.

This enables:

- Hyper‑personalised phishing, business email compromise and romance/investment scams written in fluent, context‑aware prose. Microsoft and OpenAI have already linked state‑backed groups in Russia, Iran, China and North Korea to this kind of LLM‑assisted phishing and reconnaissance, showing that it is not a hypothetical risk but an observed pattern in live operations.

- Extortion and “recruitment” emails that reference real projects, colleagues or career moves to overcome scepticism, echoing the “psychologically targeted extortion” seen in Anthropic’s case studies of Claude‑assisted attacks.

- Multi‑lingual campaigns where the same core scam is adapted to local idioms and office jargon, making it far harder to rely on the old tell of “bad English” as a warning sign.

Beyond text, cheap voice‑cloning and deepfake video let criminals impersonate executives, wealth managers or family members. Visa and multiple regulators now treat deepfake‑enabled payment fraud as a mainstream threat, with documented cases of CFOs and finance staff being tricked into transferring tens of millions of dollars on the basis of AI‑generated video calls.

That undermines controls that rely on “recognising the voice” on a call, or a short video check for remote onboarding and high‑value approvals. Call centres, treasury desks and customer authentication flows are already being probed with these techniques.

Agentic offence and crime‑as‑a‑service

AI agents are turning what used to be team sports into one‑person operations. Coding assistants can write malware, automate phishing sites and generate infrastructure‑as‑code to spin up servers and proxies. In one Stanford experiment, an AI security agent running at roughly $18 per hour found more valid vulnerabilities on a live network than 9 out of 10 professional penetration testers, illustrating how much heavy lifting an “operator‑grade” agent can do once pointed at a target environment.

More advanced agents can:

- Plan attack campaigns end‑to‑end, from reconnaissance and lure creation through to exfiltration steps.

- Iterate quickly on failed attempts, adjusting wording, payloads or infrastructure based on partial feedback.

At the same time, crime‑as‑a‑service tools branded as “AI helpers” package this capability for less‑skilled users. Black‑market models such as FraudGPT and WormGPT, along with more permissive open‑source systems, are already being used to generate convincing phishing kits, scam scripts and exploit snippets at scale, lowering the barrier to entry and massively increasing the volume of attacks. As one Anthropic analyst put it, “what would have otherwise required maybe a team of sophisticated actors… now, a single individual can conduct, with the assistance of agentic systems.”

New technical attack paths driven by AI

AI is also creating fresh technical entry points.

Prompt‑injection attacks hide malicious instructions inside web pages, PDFs, emails or images. When an AI‑enabled browser or assistant reads them, it may follow those hidden instructions instead of the user’s request. That can lead agents to:

- Leak sensitive data from previous chats or internal systems.

- Change security settings, follow malicious links or perform risky actions while the user thinks they are “just asking a question”.

Security research on emerging AI browsers has already demonstrated real‑world prompt‑injection exploits that hijack agents’ “memory” features to escalate access, deploy malware or exfiltrate data, with vendors acknowledging prompt injection as a frontier problem without robust, general‑purpose mitigations yet.

Model supply chains are another weak spot. Organisations are increasingly downloading open‑source models and components. Attackers respond with typosquatted or backdoored artefacts, and by poisoning training data. Joint work by JFrog and Hugging Face found hundreds of public models embedding malware or arbitrary code, with attacks on model repositories growing faster than the ecosystem itself. Pulling a compromised model into an internal environment may quietly introduce new ways to bypass checks or exfiltrate data.
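One basic control against swapped or tampered model files is to pin a known-good digest and refuse to load anything that does not match. The sketch below uses only the Python standard library; the function names are hypothetical, and the pinned digest is assumed to come from a trusted internal record rather than from the same place as the download.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned_sha256: str) -> bool:
    """Return True only if the file matches the digest pinned at review time."""
    return hmac.compare_digest(sha256_of(path), pinned_sha256.lower())
```

Digest pinning only proves the file is the one that was reviewed. It does not show that the reviewed model was safe in the first place, so it complements scanning and provenance checks rather than replacing them.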

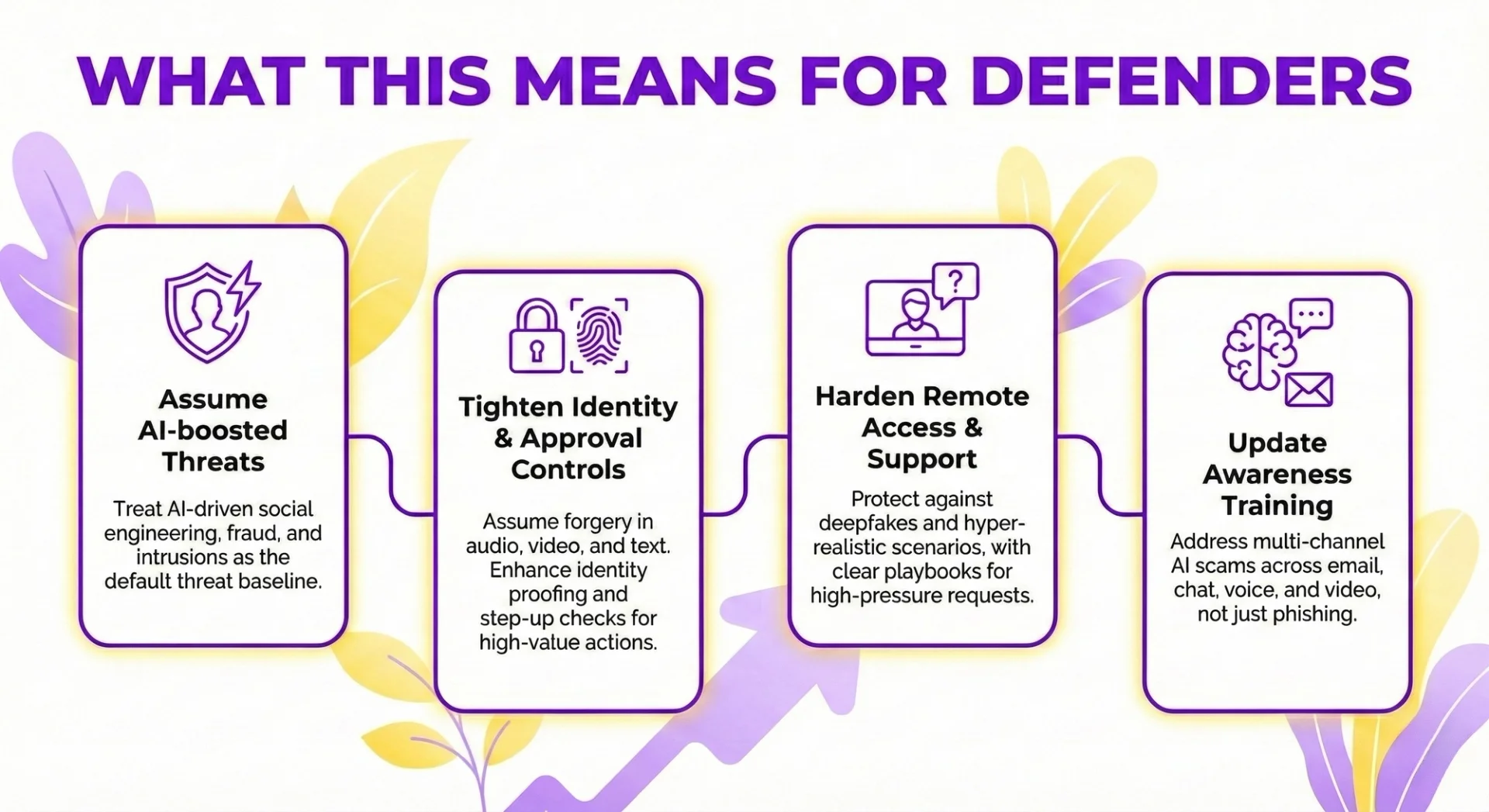

What this means for defenders

Put simply, attackers gain:

- Greater speed and reach.

- More believable scams that pass casual scrutiny.

- Faster development and testing of exploits and infrastructure.

Defenders cannot assume “pre‑AI” threat levels. Industry surveys now consistently rank AI‑driven attacks as one of the top emerging cyber risks, with CISOs warning that AI is “propelling us toward an entirely new risk landscape” that combines deepfakes, shadow AI and model manipulation rather than just more of the same phishing and malware. You need to:

- Assume AI‑boosted social engineering, fraud and intrusions as the default.

- Tighten controls around identity proofing, step‑up checks and high‑value payment approvals, explicitly assuming that audio, video and writing style can be forged.

- Harden remote access and helpdesk processes against deepfakes and hyper‑realistic stories, including clear playbooks for “CEO on a video call asking for an urgent transfer”.

- Update awareness training to cover multi‑channel AI scams, not just clumsy phishing emails, and to address the fact that staff may be dealing with AI‑generated content across email, chat, voice and video.

The goal is not panic, but parity: design controls on the assumption that your adversary has powerful AI in their toolkit too.

New risks when you add AI to your stack

AI changes your attack surface as much as it changes your capabilities. The main risks come from letting software act with too much freedom, remember too much, and connect to too many things without clear guardrails. Insurers now describe AI as creating an “entirely new risk landscape” for cyber, with AI‑driven attacks ranked as the leading emerging threat in both US and UK boardrooms.

Autonomy without common sense

When AI systems can click buttons, move money, create tickets, change firewall rules or call APIs, they stop being “just analytics” and become operational actors. Unlike humans, they do not understand intent or business context, so small errors can snowball at machine speed.

Anthropic’s recent threat‑intelligence work has already shown agentic models being used as “both a technical consultant and active operator” in real extortion campaigns, compressing what once required a skilled team into the actions of a single person. That same ability to execute end‑to‑end workflows is what makes over‑permissive defensive agents so dangerous.

Hallucinated instructions, misread logs or poisoned inputs can drive concrete harm, for example:

- Incorrectly relaxing access controls or security groups.

- Rolling out flawed configuration changes across many systems.

- Deleting or sharing the wrong data set because it matches a pattern, not a policy.

The deeper risk is over‑trust. Once AI is embedded in workflows, people tend to assume “the system knows what it’s doing” and stop double‑checking. Surveys of CISOs and CEOs show a persistent gap here: executives are markedly more optimistic than security leaders that AI will improve their cyber defences, even as they rate AI‑driven attacks as their top concern. That is exactly when silent failures become systemic incidents.

Memory, data leakage and shadow storage

Modern AI assistants work by absorbing rich context: documents, chat histories, code, tickets, emails and browser content. This creates powerful help – and powerful leakage paths.

If you do not define strict data boundaries, sensitive material can drift into places you neither control nor fully see, including vendor environments and training pipelines. Typical exposure points include:

- Customer records or transaction data used to “improve the model”.

- Source code, keys or architectural diagrams pasted into public tools.

- Long‑lived interaction histories that become an ungoverned shadow archive.

Regulators are already warning that AI plug‑ins and integrations can quietly bypass existing data‑loss prevention, and large‑scale surveys find shadow AI is now the top AI‑related concern for many CISOs. Where firms have audited usage, a majority admit they lack clear policies or automated oversight for open‑source and external models, despite staff routinely uploading confidential material.

Shadow AI worsens this. Staff turn to convenient public models to get work done, often uploading confidential material without realising the retention or residency implications. In practice, once data has been absorbed into a third‑party model, there is rarely a clean way to “pull it back out”, which turns casual experimentation into a long‑term governance problem.

Model unpredictability and drift

Even well‑engineered models hallucinate, embed bias and degrade as underlying data and threats evolve. In cybersecurity, this shows up as:

- False negatives: missed attacks because patterns look “normal” to an out‑of‑date model.

- False positives: floods of noisy alerts that drive analyst fatigue and dangerous click‑through behaviour.

Researchers and regulators now treat this as a model‑risk issue as much as a security issue: you cannot “patch” an AI brain in the way you patch a bug. Without continuous monitoring and challenger models, defensive systems can appear to be working while their effective coverage quietly erodes. Industry breach studies are already attributing a growing share of incidents to AI‑driven attacks that evade legacy, rules‑based tools; relying on drifting models just compounds that gap.

Over time, teams can become dependent on these tools and less inclined to challenge their output. If nobody notices that performance has quietly slipped, you end up with a brittle defence that fails exactly when novel attacks arrive.
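One common way to watch for drift is to compare the distribution of a model's scores in production with a baseline window. The sketch below computes the Population Stability Index (PSI) over pre-binned counts. PSI is an assumption here, chosen as a widely used drift statistic; the article does not prescribe a specific metric.

```python
from math import log


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index between baseline and current bin counts.

    PSI = sum((a_i - e_i) * ln(a_i / e_i)) over bins, where e_i and a_i are the
    baseline and current shares of observations in bin i.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both inputs must use the same bins")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    total = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor the shares so empty bins do not cause division by zero or log(0).
        e_share = max(e / e_total, eps)
        a_share = max(a / a_total, eps)
        total += (a_share - e_share) * log(a_share / e_share)
    return total
```

A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but any alerting threshold should be validated against the model in question.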

Third‑party and supply‑chain entanglement

AI is being woven into SaaS and security products by default. New “assistants” appear in dashboards, email clients and collaboration suites, often switched on automatically and tightly coupled to vendor back‑ends. This expands your attack surface in ways that are hard to see, let alone test.

Supervisors such as the FCA now flag opaque supplier AI as a specific cyber‑resilience risk: you may not even know where a critical vendor has embedded models into their product, or how those models handle your data and logs. At the same time, banking surveys show that well over 70% of breaches originate in the vendor and supply‑chain ecosystem, with AI‑enabled attackers deliberately hunting for the weakest third‑party route into better‑defended institutions.

You now rely not only on vendor security, but also on their identity and access management, their prompt handling, and their own supply chain. Misconfiguration or over‑broad permissions in any of these layers can expose your data. Security researchers analysing open‑source model hubs have already found hundreds of models laced with malicious code, illustrating how the “model supply chain” itself has become a target.

Meanwhile, machine identities proliferate: service accounts, API keys, agent tokens and integration users. If privileges are not tightly scoped and regularly reviewed, compromise of a single AI agent or connector can provide wide lateral movement. Treating agents as trusted internal services without Zero Trust‑style controls simply hands a fallible model the keys to your environment.

In practice, the new AI‑driven risks cluster around:

- Autonomous actions without human sense‑checking.

- Excessive memory and opaque data flows.

- Unpredictable, drifting models trusted as if they were static rules.

- Rapid growth in third‑party features and machine identities you do not fully control.

Each of these is already showing up in breach statistics, regulator speeches and threat‑intel case studies; the question is whether your architecture and governance assume this reality, or are still tuned for a pre‑AI stack.

How to use AI in cybersecurity safely

AI can be a powerful defender, but only if it is tightly scoped, supervised and instrumented. The aim is not full autonomy, but faster, better-supported human decisions. As one FCA speech put it, this is a “pivotal moment” where AI can either strengthen or undermine security depending on the guardrails around it.

Start with the right use cases

Begin where AI’s strengths are clear and the risks are controllable. Good starting points include:

- Alert triage and correlation to reduce noise and surface genuine incidents faster. Large security teams are already using AI to compress investigation time and cut false positives by correlating signals across tools and data sources, a pattern echoed in multiple industry reports on AI‑enabled SOCs.

- Incident summarisation and reporting to give analysts rapid context across logs, tickets and mail. Security leaders interviewed in Forbes describe AI improving investigation efficiency by more than 50% when used to assemble timelines and likely root causes.

- Vulnerability prioritisation and patch recommendation based on exploit likelihood, asset criticality and exposure. Early studies of AI security agents such as ARTEMIS show they can match or outperform human penetration testers at scale, while remaining far cheaper to run over time.

- Identity and access analytics to flag unusual privilege use, impossible travel and suspicious device changes. Financial‑sector analyses from regulators and banks highlight AI‑driven identity analytics as a core defence against deepfake‑enabled payment fraud and synthetic identities.

- Fraud risk scoring and anomaly detection for payments, logins and account changes. Surveys of banks and insurers consistently rank AI‑based fraud and anomaly detection as the most valuable security application, particularly where attackers are already using generative models to personalise scams.
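Taking vulnerability prioritisation from the list above as an example, the three inputs it names (exploit likelihood, asset criticality and exposure) can be combined into a simple ranking score. The multiplicative formula, the exposure weights and the `Finding` fields below are assumptions for illustration, not an established scoring standard.

```python
from dataclasses import dataclass

# Assumption: internet-facing assets count double, partner-facing 1.5x.
EXPOSURE_WEIGHT = {"internal": 1.0, "partner": 1.5, "internet": 2.0}


@dataclass(frozen=True)
class Finding:
    vuln_id: str               # hypothetical identifier
    exploit_likelihood: float  # 0..1, e.g. from a predictive model
    asset_criticality: int     # 1 (low) .. 5 (business critical)
    exposure: str              # key into EXPOSURE_WEIGHT


def priority(finding: Finding) -> float:
    """Higher means patch sooner."""
    return (
        finding.exploit_likelihood
        * finding.asset_criticality
        * EXPOSURE_WEIGHT[finding.exposure]
    )


def prioritise(findings):
    """Return findings ordered from most to least urgent."""
    return sorted(findings, key=priority, reverse=True)
```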

Keep people firmly in charge of high-impact decisions. Use AI to recommend and explain, not to act unilaterally, whenever actions:

- Move or release money.

- Change access, roles or key security controls.

- Modify production systems or critical configurations.

This human-in-the-loop pattern delivers speed without handing attackers a fully automated control plane if something goes wrong. It also reflects the direction of travel in financial and regulatory guidance, which stresses AI as a decision-support layer rather than an unsupervised “security brain”.
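The human-in-the-loop pattern can be expressed as a small gate in front of whatever executes an agent's proposals. In this sketch the action kinds, the `ProposedAction` type and the `execute` function are hypothetical names; the point is only that high-impact categories cannot run without a named approver.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Mirrors the three categories above; the identifiers themselves are assumptions.
HIGH_IMPACT = {"move_money", "change_access", "modify_production"}


@dataclass(frozen=True)
class ProposedAction:
    kind: str
    target: str
    rationale: str  # the agent's explanation, shown to the approver


def execute(
    action: ProposedAction,
    run: Callable[[ProposedAction], None],
    approver: Optional[str] = None,
) -> str:
    """Run low-impact actions directly; hold high-impact ones until a human approves."""
    if action.kind in HIGH_IMPACT and not approver:
        return "pending_approval"
    run(action)
    return "executed"
```

Keeping the gate outside the agent matters: the model can recommend and explain, but the decision about whether a sensitive action runs is enforced by ordinary code it cannot talk its way past.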

Engineer guardrails into the architecture

Treat AI agents like privileged digital employees. Give them strong identities and tight permissions, not a magic bypass of existing controls:

- Enforce MFA, device and network checks, and continuous authentication for machine as well as human identities.

- Apply least privilege and just-in-time access so agents only touch what they need, when they need it.

- Define clear roles: which systems and actions each agent is allowed, and explicitly disallowed, to use.

Mediate what agents can do and see:

- Use policy-as-code to describe which tools an agent can invoke and which datasets it can access.

- Run agents in sandboxed environments with strict allow-lists for browsing and external calls.

- Add output filters to block sensitive data leakage or unsafe instructions before results reach users or downstream systems.

Security researchers tracking real‑world misuse are clear that poorly constrained agents can be turned into “technical consultants and active operators” for cybercrime, orchestrating end‑to‑end attacks rather than just writing code snippets. That is exactly the scenario these guardrails are designed to prevent.
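As a minimal illustration of policy-as-code for agents, the sketch below keeps an explicit allow-list of tools and datasets per agent and denies everything else by default. The agent, tool and dataset names are invented for the example; real deployments would usually keep such policy in version control and evaluate it with a dedicated policy engine.

```python
# Hypothetical policy: every name here is illustrative.
POLICY = {
    "triage-agent": {
        "tools": {"search_logs", "summarise_incident"},
        "datasets": {"siem_alerts"},
    },
    "response-agent": {
        "tools": {"search_logs", "isolate_host"},
        "datasets": {"siem_alerts", "asset_inventory"},
    },
}


def is_permitted(agent: str, tool: str, dataset: str, policy=POLICY) -> bool:
    """Deny by default: unknown agents, tools or datasets are all refused."""
    rules = policy.get(agent)
    if rules is None:
        return False
    return tool in rules["tools"] and dataset in rules["datasets"]
```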

Secure prompts, inputs and outputs

Prompts and tool calls are now part of the attack surface. Build safety into them from the start:

- Sanitise inputs, stripping active instructions and executable content from untrusted text, HTML and documents.

- Use tightly scoped system prompts that restate security policies, permitted tools and hard limits.

- Constrain retrieval and context-building to vetted, allow-listed knowledge bases rather than the open internet.
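For the input sanitisation step, a first pass on untrusted HTML is to keep only the text a reader would plausibly see. The sketch below uses Python's standard `html.parser` to drop comments, scripts, styles and templates, and strips zero-width characters sometimes used to hide instructions. It is a partial measure under stated assumptions: it does not catch text hidden with CSS, instructions embedded in images, or plainly visible malicious text.

```python
from html.parser import HTMLParser

SKIPPED_TAGS = {"script", "style", "template", "noscript"}
# Translation table that deletes common zero-width characters.
ZERO_WIDTH = dict.fromkeys(map(ord, "\u200b\u200c\u200d\u2060\ufeff"))


class _VisibleText(HTMLParser):
    """Collect text nodes, ignoring comments and non-rendered elements."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)

    # handle_comment is intentionally not overridden, so comments are dropped.


def sanitise_html(raw: str) -> str:
    """Return whitespace-normalised visible text from untrusted HTML."""
    parser = _VisibleText()
    parser.feed(raw)
    parser.close()
    text = " ".join(" ".join(parser.parts).split())
    return text.translate(ZERO_WIDTH)
```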

Treat prompt injection like phishing for machines:

- Test AI systems specifically for injection, manipulation and escalation scenarios.

- Limit what any agent can do based solely on natural-language instructions, even from apparently trusted sources.

Independent research on AI browsers and embedded assistants has already shown how hidden instructions in web pages, emails or screenshots can hijack agents, escalate their privileges and exfiltrate data, even when traditional sandboxing is in place. Treating prompts, memories and tool invocations as sensitive interfaces - not just UX details - aligns with emerging OWASP and NIST guidance on generative AI security.

Monitoring, testing and governance

AI security controls need the same rigour as any other critical system, with extra attention to behaviour over time.

Build continuous observability:

- Log prompts, model versions, tools invoked, decisions and outcomes for full traceability.

- Monitor agent behaviour in real time, with anomaly detection, instant kill switches and rapid rollback.
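A traceable audit record might look like the sketch below: one JSON line per agent decision. The field names are assumptions. As a design choice, the record stores a SHA-256 digest of the prompt rather than the prompt itself, on the assumption that full prompts live in a separate access-controlled store so that the log does not become another leakage path.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(agent, model_version, prompt, tools, decision, outcome) -> str:
    """Serialise one agent decision as a JSON line for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "model_version": model_version,
        # Digest lets investigators match the log entry to the stored prompt.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "tools": list(tools),
        "decision": decision,
        "outcome": outcome,
    }
    return json.dumps(record, sort_keys=True)
```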

Adopt threat-led testing:

- Include AI components and agents in regular red- and purple-teaming.

- Rehearse scenarios such as third-party outages, model failures and misbehaving agents so playbooks are ready before an incident.

Govern models and data as living assets:

- Apply model risk disciplines: validation, challenger models, performance SLAs, drift monitoring and planned retraining.

- Enforce data minimisation, masking and residency, and back these with robust vendor contracts.

- Tackle shadow AI through clear policies, approved tools and training that discourages unsafe data uploads.

Surveys of CISOs and regulators now treat AI‑driven attacks as a primary emerging risk, while also highlighting that most organisations lack mature processes for monitoring how models drift or fail in production. One recent industry review described AI security as moving from “trust but verify” to “never trust, always verify” for models and agents as well as people, with continuous evidence needed to prove controls are working as intended.

Culture, skills and future-proofing

Technology alone will not keep pace with adaptive attackers. Organisations need people and processes tuned to AI-era risks.

Prioritise upskilling and cross-functional teams:

- Combine AI, MLOps, security engineering and governance expertise in design and review.

- Update security awareness so staff recognise deepfakes, AI-crafted phishing and multi-channel scams.

Sander Schulhoff, a researcher focused on AI security gaps, argues that traditional teams often “secure the pipes but not the brains”, underestimating how models can be manipulated via prompts and context rather than code. Building literacy in prompt injection, model misuse and AI behaviour is now as important as patching and identity management.

Finally, design for a limited blast radius. Assume AI autonomy will rise:

- Segment systems so any single agent has constrained impact.

- Build rapid rollback, containment and graceful failure into workflows now, before you depend on them in a crisis.

By pairing focused use cases with engineered guardrails, continuous oversight and a prepared workforce, organisations can draw on AI’s defensive power without inheriting unacceptable new risks.

AI now sits on both sides of the fence in cybersecurity. It is amplifying phishing, deepfakes and automated exploitation, yet it is also the most powerful tool we have for faster detection, smarter analytics and scaled response. Standing still is not realistic - but rushing in without discipline simply trades old risks for new ones.

The priority is to use AI where it delivers measurable gains in speed, scale and insight, especially across detection, investigation and fraud prevention, while assuming that attackers are doing the same. Nation-state groups are already using general-purpose models to refine phishing, scripting and reconnaissance, and surveys of CISOs suggest AI‑driven attacks are now a top emerging threat.

That means acknowledging fresh exposure from autonomous agents, data leakage, model drift and opaque third-party features, then engineering those risks down rather than wishing them away - exactly the concern regulators like the FCA and insurers such as AXIS Capital now highlight in their AI–cyber risk work.

Core actions to take forward:

- Map where AI already exists in your stack - defensive tools and embedded SaaS features - and identify both gaps and blind spots. FCA cyber coordination guidance, for example, stresses how hard it is to see where suppliers have quietly embedded AI into controls.

- Start with a small number of governed, high-impact use cases with humans firmly in the loop for sensitive actions. Studies of security agents such as Stanford’s ARTEMIS show AI can outperform many human testers, but also that it still needs oversight to manage false positives and edge cases.

- Build out guardrails iteratively: identity- and data-centric controls, prompt and tool safety, continuous monitoring, testing and clear ownership. Research on securing large language models increasingly points to layered defences - API security, prompt-injection filtering and AI-on-AI monitoring - rather than relying on vendor guardrails alone.

Organisations that pair AI with strong security fundamentals and explicit accountability will be best positioned to withstand the next wave of AI-enabled threats.
