AI for HR: Smarter Hiring, Better Retention

AI has moved from buzzword to business priority, and HR is no exception. Senior leaders now see AI as critical to staying competitive in the talent market, especially as skills needs shift faster than traditional hiring and development processes can keep up. Yet many HR teams are still experimenting on the margins, or wrestling with tools that do not quite match their needs. There is also a clear tension: leaders want to harness AI, but employees and HR professionals worry about job security, fairness and intrusive surveillance.

This article takes a practical, boundaries‑first view. It is about using AI to add speed and structure to hiring and retention, not replacing people with algorithms or turning workplaces into data‑driven panopticons. The most effective organisations treat AI as a force multiplier for HR: automating repetitive, rules‑based work so people can focus on judgement, relationships and culture. As one CHRO put it, AI should become “a collaborator that handles the grunt work while humans stay accountable for the calls that really affect people’s lives”.

Used well, AI can:

- Accelerate sourcing, screening, onboarding and everyday employee support

- Improve consistency and transparency in how decisions are prepared

- Surface risks and opportunities earlier, from skills gaps to burnout signals

But it must sit inside clear guardrails, with humans firmly accountable for the decisions that shape people’s careers and the culture they work in.

How AI is reshaping HR – with humans still in charge

AI is moving from isolated tools to something closer to “HR superagents”. Earlier generations focused on narrow tasks such as scanning CVs for keywords or auto‑scheduling interviews. Newer, agentic systems can plan and complete multi‑step HR workflows while staying under human supervision. Analysts at the Josh Bersin Company argue that these “AI‑powered superagents” will drive “the largest HR transformation in decades”, with many traditional processes automated but HR roles redesigned rather than removed.

Vendors, virtual colleagues and HR

Instead of jumping between disconnected apps, HR teams can interact with conversational agents that:

- answer payroll or policy questions in plain language

- draft contracts, emails and job adverts

- compile reports and spot anomalies in pay or turnover data

- trigger tasks such as raising tickets or updating records

Vendors such as ADP are already shipping persona‑based AI agents for HR, payroll and employee support, designed explicitly to “think, plan and take action with human oversight” rather than to replace managers.

Microsoft is taking a similar tack with Copilot “virtual colleagues” that automate background tasks like onboarding workflows while users retain control over decisions and guardrails. As one HR chief put it, the real shift is “from managing jobs to redesigning work”, with AI handling routine steps so people can focus on judgement, coaching and complex issues.

These capabilities demand an updated operating model. HR needs clear processes for when agents can act, when they must pause for approval, and which data they can access. That means explicit permissions, documented hand‑offs between AI and people, and closer partnership with IT and legal on governance.
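As an illustration of what "explicit permissions" might look like in practice, here is a minimal Python sketch of a permission check for one agent. It is not taken from any vendor's product: the `AgentPolicy` structure, the action names and the data categories are all hypothetical, chosen only to show the three outcomes described above (act, pause for approval, or be blocked).

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical permission policy for a single HR agent."""
    autonomous_actions: frozenset  # actions the agent may take on its own
    approval_actions: frozenset    # actions that pause for a named human
    readable_data: frozenset       # data categories the agent may read


def check_action(policy, action, data_needed):
    """Return whether the agent may act, must pause, or is blocked."""
    # Block anything that needs data outside the agent's permissions.
    if not set(data_needed) <= policy.readable_data:
        return Decision.DENY
    if action in policy.autonomous_actions:
        return Decision.ALLOW
    if action in policy.approval_actions:
        return Decision.NEEDS_APPROVAL
    # Default to deny: actions not listed in the policy are never taken.
    return Decision.DENY


# Illustrative policy for an onboarding agent (all names are assumptions).
onboarding_agent = AgentPolicy(
    autonomous_actions=frozenset({"send_reminder", "raise_it_ticket"}),
    approval_actions=frozenset({"update_employee_record"}),
    readable_data=frozenset({"start_date", "role", "manager"}),
)
```

The design choice worth noting is the default: anything not written into the policy is denied, so a new capability has to be agreed with IT and legal before the agent can use it.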

What AI is good at – and what must stay human

AI is strongest wherever work is repetitive, rules‑based or data‑heavy. In HR this includes:

- triaging large applicant pools against agreed criteria

- generating first drafts of job descriptions, policies and feedback

- scheduling interviews, training and reminders

- answering routine employee FAQs

- surfacing patterns in retention, skills gaps or engagement

Tools that anonymise CVs or scan job adverts for exclusionary language are already used by almost half of UK recruitment agencies to make early‑stage screening more consistent and inclusive. At the same time, examples of automated screeners rejecting “hidden workers” because of rigid rules on gaps or keywords underline the risk of treating AI outputs as verdicts rather than inputs.

But AI must not replace human judgement where context and nuance matter. Hiring decisions, culture and team fit, performance ratings, employee relations and any sensitive conversation should remain human‑led. A practical principle is:

- AI prepares, structures and suggests.

- Humans interpret, decide and explain.

Used this way, AI speeds up good practice rather than automating away responsibility.

Trust, skills and change management

Adoption will stall if people feel watched, replaced or judged by a “black box”. Many employees already worry about job security and surveillance, even as employers seek more AI‑literate HR teams. Recent research with senior HR and C‑suite leaders found that 70% are concerned about AI’s impact on job security in talent functions, even though most see it as essential for a competitive talent pool.

HR has to lead a different narrative: AI should remove admin drag and create space for more coaching, problem‑solving and strategic work, not simply load extra tasks onto already stretched teams. As Cisco’s Chief People Officer Kelly Jones warns, the worst mistake is to use efficiency gains “to just pile on more work” rather than redesign roles to focus on higher‑value activity.

Key priorities include:

- clear communication on where AI is used, why, and with what safeguards

- basic AI and data literacy for HR and line managers

- modelling responsible use in HR’s own workflows

There is also a longer‑term risk: as junior, process‑heavy tasks are automated, traditional “learn by doing” roles may disappear. HR leaders and regulators are already rethinking early‑career pathways so that graduates are not assessed on AI‑generated work. Rather than hollowing out the early‑career pipeline, HR should redesign development routes through structured rotations, projects and mentoring, so that future HR and people leaders still build the core skills and judgement AI cannot provide.

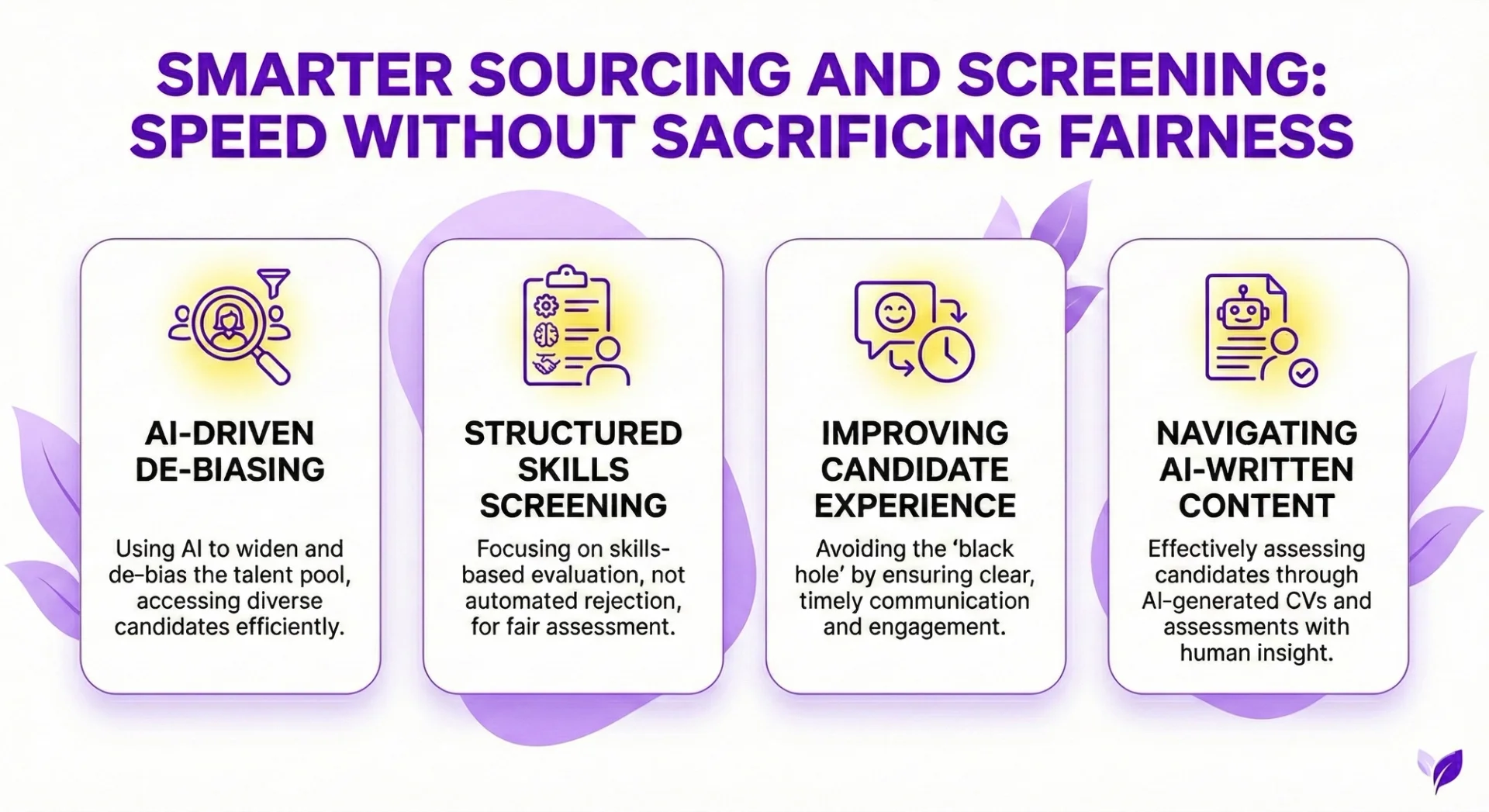

Smarter sourcing and screening: speed without sacrificing fairness

AI can dramatically cut the time spent finding and filtering candidates, but it should sharpen human judgement, not replace it. Used well, it can widen the talent pool, help reduce bias and keep decisions transparent.

Using AI to widen and de‑bias the talent pool

Modern tools can scan job boards, social platforms and internal databases to surface people who match skills‑based criteria, including “passive” candidates who are not actively applying. This shifts focus from who has the most polished CV to who can actually do the work.

HR leaders at firms such as Salesforce and ManpowerGroup describe AI as a way to search wider and match on contributions and skills rather than job titles or linear career paths, opening up more non‑obvious candidates and non‑traditional trajectories.

AI can also make adverts more inclusive by spotting gendered or exclusionary wording and suggesting neutral, skills‑focused alternatives. In the UK, nearly half of recruitment agencies now use AI somewhere in their hiring process, and many use it specifically to flag biased language and expand access beyond traditional university or employer “pedigree”. Over time, this helps attract more diverse applicants and reduces self‑selection out of the process.

Anonymisation is another practical win. Systems can strip out identifiers such as:

- Name and contact details

- School and university

- Postcode and other location clues

so early screening is anchored in skills, experience and potential, not assumptions. Platforms like Greenhouse and Affinda already use this pattern to support anonymous applications and reduce the influence of unconscious bias at the first sift.
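The anonymisation step can be sketched in a few lines of Python. This is a simplified illustration, not how Greenhouse or Affinda implement it: the field names in `IDENTIFYING_FIELDS` are hypothetical, and a real applicant tracking system will have its own schema and will need far more thorough handling of free text.

```python
import re

# Hypothetical field names; a real ATS export will use its own schema.
IDENTIFYING_FIELDS = {
    "name", "email", "phone", "address", "postcode", "school", "university",
}

# Simple pattern for email addresses that may appear in free-text fields.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def anonymise_application(application):
    """Return a copy of the application without identifying fields."""
    redacted = {}
    for key, value in application.items():
        if key in IDENTIFYING_FIELDS:
            continue  # drop the identifier entirely
        if isinstance(value, str):
            # Free text can still leak contact details, so mask emails.
            value = EMAIL_PATTERN.sub("[redacted]", value)
        redacted[key] = value
    return redacted


application = {
    "name": "A. Candidate",
    "university": "Example University",
    "postcode": "AB1 2CD",
    "skills": ["payroll", "SQL"],
    "summary": "Contact me at a.candidate@example.com for work samples.",
}
screening_view = anonymise_application(application)
```

Here `screening_view` keeps only the `skills` and `summary` fields, with the email address masked. The original record stays untouched so identifiers can be restored once a candidate reaches interview.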

Structured, skills‑based screening – not automated rejection

AI works best when it supports a move away from blunt proxies like years in role or prestige employers. Instead, models can score candidates against clearly defined skills, outputs and learning agility, creating a more level playing field. In practice, large employers are already shifting this way: Salesforce, for example, has deliberately pivoted assessment away from total years of experience towards candidates’ ability to learn and adapt in role (Business Insider interview with Salesforce talent leaders).

AI‑assisted shortlisting can help by:

- Applying standard rubrics and consistent questions

- Comparing candidates side‑by‑side against the same criteria

- Highlighting patterns recruiters might overlook at scale

The guardrail is simple: AI can create or rank a shortlist, but humans must review, challenge and make the final hiring decision. As one HR leader at Salesforce put it, AI should “augment recruiters’ capacity”, but “it was not to make a hiring decision” – a boundary that keeps nuance, context and culture fit firmly in human hands.
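To make the "rank, but do not decide" boundary concrete, here is a small Python sketch of rubric‑based scoring. The criteria, weights and 1–5 rating scale are invented for illustration; the point is that every candidate is scored against the same rubric and every output is marked for human review rather than accepted or rejected.

```python
# Hypothetical rubric: criterion -> weight (weights sum to 1.0).
RUBRIC = {
    "payroll_knowledge": 0.4,
    "stakeholder_communication": 0.3,
    "learning_agility": 0.3,
}


def score_candidate(ratings):
    """Weighted score from 1-5 ratings; every criterion must be rated."""
    missing = set(RUBRIC) - set(ratings)
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    return round(sum(RUBRIC[c] * ratings[c] for c in RUBRIC), 2)


def rank_for_review(candidates):
    """Order candidates for a recruiter to review; nobody is rejected here."""
    scored = [
        {
            "candidate_id": candidate_id,
            "score": score_candidate(ratings),
            "status": "awaiting_human_review",
        }
        for candidate_id, ratings in candidates.items()
    ]
    return sorted(scored, key=lambda row: row["score"], reverse=True)


shortlist = rank_for_review({
    "c1": {"payroll_knowledge": 4, "stakeholder_communication": 3, "learning_agility": 5},
    "c2": {"payroll_knowledge": 5, "stakeholder_communication": 2, "learning_agility": 3},
})
```

The function deliberately has no "reject" status: the ranking is an input to the recruiter's decision, not a substitute for it.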

Avoiding the “black hole” candidate experience

Over‑automated filters risk excluding “hidden workers” whose CVs do not match neat patterns, and can reproduce bias if historic data are skewed. Harvard Business School research suggests rigid screening software has already shut out millions of viable candidates through rules such as automatic rejection for short employment gaps. To avoid this:

- Test models regularly for accuracy and bias, including sampling rejected CVs

- Build human‑in‑the‑loop checkpoints, such as reviewing rejections above a set threshold

- Explain to candidates where AI is used and how their data are handled

This preserves speed while maintaining fairness and trust, and aligns with emerging regulation that treats recruitment AI as “high‑risk” and expects it to be auditable, explainable and overseen by people rather than left to run as a black box.
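One widely used starting point for the bias tests mentioned above is the impact ratio: each group's shortlisting rate divided by the rate of the most‑selected group. The article does not prescribe a specific metric, so treat this Python sketch as one possible check. The 0.8 threshold reflects the "four‑fifths" rule of thumb from US employment guidance and is a prompt for human investigation, not a legal test; the group labels and figures are made up.

```python
from collections import Counter


def impact_ratios(outcomes):
    """Compute impact ratios from (group_label, was_shortlisted) pairs."""
    totals, selected = Counter(), Counter()
    for group, shortlisted in outcomes:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    if not totals:
        return {}
    rates = {group: selected[group] / totals[group] for group in totals}
    best = max(rates.values())
    if best == 0:
        # Nobody was shortlisted, so the ratio is undefined.
        return {group: None for group in rates}
    return {group: round(rate / best, 2) for group, rate in rates.items()}


def flag_for_review(ratios, threshold=0.8):
    """List groups whose ratio falls below the threshold."""
    return sorted(
        group for group, ratio in ratios.items()
        if ratio is not None and ratio < threshold
    )


# Illustrative data: 50 of 100 shortlisted in group A, 30 of 100 in group B.
outcomes = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
ratios = impact_ratios(outcomes)
needs_review = flag_for_review(ratios)
```

In this made‑up example group B is flagged, which would trigger a closer look at the screening criteria and a sample of rejected CVs rather than an automatic conclusion of bias.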

Navigating AI‑written CVs and assessments

Many candidates now use generative AI to polish CVs and cover letters. Rather than trying to police this, shift emphasis to evidence of skills: work samples, practical tests and indicators of learning aptitude. The UK Financial Conduct Authority, for instance, explicitly requires that all assessment work is the candidate’s own, even as it normalises AI‑enabled tools like Microsoft Copilot in day‑to‑day work. That distinction – AI as an assistive tool at work, but not a proxy for assessment – is a useful benchmark.

AI can help recruiters prepare for these conversations by:

- Turning job profiles into structured interview guides

- Suggesting objective, consistent question sets and scorecards

What must not change is who makes the call. Interviews, hiring judgements and discussions of fit remain human activities, with AI providing preparation and documentation, not replacing real conversations. As one senior HR voice put it, “AI is really good for structuring unstructured data, remembering things, and taking notes… But any conversation that requires emotional intelligence, please don’t use AI”.

Onboarding and employee support: AI as a 24/7 HR co‑pilot

AI can turn onboarding and day‑to‑day HR support into a smooth, predictable experience, while still leaving judgement, coaching and sensitive conversations to humans. As Cisco’s chief people officer Kelly Jones puts it, AI should be used to “give people a percentage of their day back”, not as a reason to pile on more work or remove the human element from HR.

Orchestrated onboarding journeys

New starters often lose days waiting for laptops, system access or basic answers. Information is scattered across emails, portals and PDFs, and managers juggle checklists in their spare time.

Agentic AI can act as an orchestration layer across these moving parts, using your existing systems. Firms such as Hitachi and Texans Credit Union have used AI assistants and automation in this way to cut onboarding times by several days and slash access set‑up from minutes to seconds, while improving the day‑one experience for new hires. Typical tasks include:

- Triggering and tracking workflows for equipment, accounts, payroll, benefits and mandatory checks.

- Sending timely nudges to new hires and managers so nothing falls through the cracks.

- Answering routine questions on policies, benefits, offices and tools, grounded in your own knowledge base.

Vendors from Microsoft’s Copilot agents to specialist HR platforms such as Shapes now treat onboarding as a prime use‑case for “virtual colleagues” that quietly co‑ordinate tasks across IT, HR and facilities in the background. Done well, this shifts HR from chasing tasks to overseeing exceptions. To prove impact, teams should track:

- Time‑to‑productivity for different roles.

- Completion rates for key onboarding tasks and training.

- HR hours spent per hire on administrative work.

The aim is not a “robotic” day one, but a structured journey where people spend more time meeting colleagues and less time hunting for forms.

Always‑on HR helpdesk – with escalation to humans

Beyond onboarding, AI chatbots can provide a 24/7 front door for common HR queries: annual leave rules, hybrid working guidelines, expenses, or benefits eligibility. When they draw only on approved content, answers stay consistent across locations and managers.

ADP, for example, now offers persona‑based HR and payroll agents that give employees and managers curated answers from each company’s own handbooks while keeping humans firmly in control of decisions. Analytics agents can also assemble standard HR reports on demand, or flag payroll anomalies such as missing tax details or eligibility checks, with clear steps for fixing issues.

Two safeguards are crucial:

- Clear escalation paths: any complex, ambiguous or sensitive query (grievances, health issues, pay disputes) must route quickly to a human.

- Audit trails: every action an agent triggers across HR systems should be logged for compliance and quality review.

These guardrails are increasingly non‑negotiable under emerging AI regulation, which treats HR and hiring tools as “high‑risk” systems that must be auditable, transparent and human‑supervised. This combination improves response times without turning HR into a black box run by bots.
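The two safeguards can be sketched together in Python. This is a deliberately simple illustration: the keyword list in `SENSITIVE_TERMS` is hypothetical and far too crude for production, where a tested classifier that errs on the side of escalation would be needed, and the audit record fields are assumptions rather than any vendor's log format.

```python
import json
from datetime import datetime, timezone

# Hypothetical keyword list; real systems need something more robust.
SENSITIVE_TERMS = (
    "grievance", "harassment", "sick", "health", "pay dispute", "disciplinary",
)


def route_query(query):
    """Route queries containing sensitive terms to a person, others to the bot."""
    text = query.lower()
    if any(term in text for term in SENSITIVE_TERMS):
        return "human"
    return "bot"


def audit_record(employee_ref, query, route, action=None):
    """Build one JSON line recording how a query was routed and handled."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "employee_ref": employee_ref,  # pseudonymous reference, not a name
        "route": route,
        "action": action,
        "query_length": len(query),    # avoid storing sensitive free text
    })


query = "I want to raise a grievance about my manager"
route = route_query(query)
log_line = audit_record("emp-001", query, route, action="escalated_to_hr_advisor")
```

Logging the route and action, rather than the full query text, is one way to keep an audit trail without building a new store of sensitive employee data.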

Supporting retention, wellbeing and development

Once people are in role, AI can help HR spot risks earlier and personalise development, without slipping into surveillance.

Predictive analytics can surface patterns that merit a human look: spikes in absence, worrying survey scores, or teams with chronic overtime. SMEs are already using these techniques to flag burnout risks, coach managers whose style is driving disengagement and reduce costly voluntary turnover.

Similarly, skills data can highlight gaps and recommend targeted learning paths for individuals and teams, echoing how firms like IBM and Accenture use AI‑driven skills libraries to match people to learning and internal opportunities.

AI can also streamline performance and development processes by:

- Drafting self‑assessments or review summaries from existing feedback for people to edit, not accept blindly.

- Recommending curated learning content based on role, skills and career aspirations.

Clear red lines protect trust:

- No intrusive monitoring such as keystroke tracking or emotion recognition – approaches that regulators are increasingly moving to restrict or ban in employment settings.

- Performance ratings, promotions and disciplinary decisions must remain human‑led, with AI limited to preparation and evidence gathering.

Used this way, AI supports retention and growth by making it easier to intervene early and to tailor development - while keeping people, not algorithms, in charge of careers.

Guardrails, governance and choosing the right tools

AI can make hiring and employee support faster and more consistent, but only if it sits inside clear guardrails. HR teams need a basic grasp of the regulatory backdrop, a practical governance model, and robust criteria for tool selection and skills development.

Regulatory and ethical backdrop HR teams must understand

Rules are tightening around AI in HR. Many workplace tools now fall into “high‑risk” categories, with requirements for documentation, transparency and human oversight. Privacy laws also constrain how employee and candidate data may be collected, stored and analysed.

Across regions, the themes are similar:

- You must be able to explain and evidence how AI is used in decisions.

- Candidates and employees should be told when AI is involved and what it does.

- Emotion‑reading tools and intrusive monitoring are increasingly restricted or discouraged.

Under the EU AI Act, for example, recruitment, screening and performance tools are explicitly classified as high‑risk systems, with bans on workplace emotion‑inference and demands for auditable, transparent models and bias controls in HR use cases.

In the US, laws such as New York City’s AEDT rules and Illinois’ AI Video Interview Act require bias audits, disclosure and, in some cases, informed consent when AI is used in hiring.

For HR, this means treating AI as part of regulated people‑management, not as a side experiment. Consent, clear privacy notices and the ability to contest outcomes all need to be designed in from the start, with processes capable of explaining why a candidate was screened out or how AI‑generated insights fed into a promotion or performance outcome.

Practical governance for AI in HR

Governance works best as a joint HR–IT responsibility with defined owners, not as an informal side job. A simple but disciplined framework should include:

- Data hygiene: standard job and skills libraries, de‑duplicated records, and regularly refreshed HR data so AI is not amplifying past errors.

- Bias testing and audits: scheduled checks on shortlists, recommendations and risk flags, with results fed back into model tuning and policy updates.

- Human‑in‑the‑loop checkpoints: clear rules that final calls on hiring, promotion and termination remain with people managers.

- Use‑case policies: written guidance on acceptable and prohibited uses (for example, no AI‑only hiring decisions, no covert productivity tracking).

- Incident response: a playbook for pausing tools, investigating and communicating if outputs appear discriminatory or incorrect at scale.

Summarised:

- Define ownership (HR + IT).

- Clean and standardise data.

- Test for bias and document results.

- Keep humans in charge of consequential decisions.

- Plan for failures before they happen.

Organisations that approach AI as a workforce transformation rather than a narrow tech rollout are already formalising these structures. Research from Josh Bersin’s firm argues that HR should “own the people and ethics side of AI deployment”, with super‑agent architectures governed through clear risk, audit and escalation mechanisms rather than left to individual teams to improvise.

Similarly, case studies from BCG show HR leading integrated AI platforms across recruitment and performance, backed by an enablement network and explicit human control over ratings and promotion decisions.

Selecting AI tools for HR: beyond shiny features

Start from your priority workflows - such as sourcing, screening, onboarding, helpdesk and analytics - then look for tools that meaningfully improve them, rather than building a random collection of apps.

Key evaluation questions:

- Capabilities and fit: Does it support your core use cases and integrate smoothly with your HRIS/ATS and collaboration tools?

- Data protection and security: Are encryption, granular access controls, audit logs and regional hosting options in place to meet your legal and internal standards?

- Governance features: Can you see why the system made a recommendation, configure role‑based permissions and mandate human review before actions are taken?

- Vendor stance on ethics and compliance: Do they provide documentation, support impact assessments and show how they manage bias and updates over time?

Vendors at the forefront of HR tech are increasingly foregrounding these points. ADP, for instance, describes its AI agents as assistants that “think, plan and take action with human oversight”, built on “security and privacy by design with ethical AI principles embedded at the foundation” and with managers retaining authority over hiring, pay and performance decisions.

Microsoft is taking a similar line with Copilot agents: they can orchestrate onboarding and internal support workflows, but are configured through Copilot Studio with strict boundaries on which data they can access and what actions they are allowed to perform.

Avoid over‑personifying AI agents as “virtual colleagues”. Treat them as systems with tightly defined roles, limits and accountability, just like any other critical business platform. The backlash when one HR platform attempted to give “digital workers” full employee records and managers inside its system underlines how quickly trust erodes if tools are framed as pseudo‑employees rather than governed infrastructure.

Building AI and data literacy in HR

Responsible adoption depends on HR understanding how these tools work and where they fail. Teams do not need to become data scientists, but they do need confidence in several areas:

- How models learn from historic data and how bias can enter or be amplified.

- Which questions to ask vendors and internal data teams about training data, evaluation and safeguards.

- How to interpret AI‑generated insights, push back on weak recommendations and escalate concerns.

Across sectors, leading employers are already making AI literacy a standard part of onboarding and early careers training. Financial firms such as JPMorgan and Citi, for example, now include prompt design and AI‑tool fluency for new joiners, coupled with explicit guidance on when not to rely on AI and how to check for hallucinations or regulatory pitfalls.

More broadly, organisations that scale generative AI successfully tend to give HR a central role in redesigning jobs, running safe “sandboxes” for experimentation, and building the skills to use AI critically rather than passively.

You can build this capability through short courses, peer learning circles and joint HR–IT working groups. Many organisations are also creating roles such as AI adoption leads or responsible AI champions within HR to coordinate practice, training and oversight as AI use scales.

Recap: AI as a force multiplier, not a replacement for HR

Used well, AI gives HR more structure, speed and visibility across hiring and retention. It can:

- sift applications and highlight skills matches

- orchestrate onboarding tasks and answer routine questions

- surface signals on engagement, skills gaps and burnout risk

But the organisations seeing real value are not handing decisions to algorithms. They are pairing AI with strong governance, clear decision rights and investment in HR and manager skills. Recent research, for instance, finds nearly half of UK recruitment agencies now use AI primarily to standardise early screening while “using AI as an aid, rather than a replacement for human judgment” in final hiring decisions.

Similarly, large employers rolling out AI assistants in HR emphasise that tools should “empower and accelerate people at work” rather than make people decisions on their behalf. As one chief people officer put it, HR is moving “from managing jobs to redesigning work”, with AI taking on repetitive tasks while humans stay accountable for judgement, culture and sensitive calls.

A simple roadmap for HR teams

A practical way to start is to keep scope narrow and control high:

- Step 1: Map pain points across sourcing, screening, onboarding and support; pick one or two focused pilots where delays or inconsistency are obvious. Onboarding is often a good candidate: case studies from Hitachi and Texans Credit Union show AI assistants and automation cutting onboarding time by days while improving day‑one readiness for new hires.

- Step 2: Bring in IT, legal and employee representatives early to set guardrails, privacy standards and clear, honest communication. Regulators such as the EU now treat recruitment and evaluation tools as “high‑risk” AI that must be auditable, transparent and subject to human oversight, so cross‑functional governance is not optional but expected.

- Step 3: Select tools that are transparent, auditable and integrate with existing systems, with explicit human‑in‑the‑loop checkpoints. Leading adopters are consolidating fragmented HR stacks and embedding AI across them so HR can see and challenge how algorithms influence sourcing, screening and development decisions.

- Step 4: Build AI literacy for HR and managers, then gather feedback from candidates and employees to refine or roll back where needed. Organisations that scale AI most effectively tend to treat HR as a testbed, giving teams safe space to experiment with AI on real workflows while staying clear that “the goal is an equal partnership between human beings and AI” rather than full automation.

Smarter hiring and better retention come when AI removes friction, not humanity. Let AI handle the repeatable work so HR and managers can do what only people can: listen carefully, coach thoughtfully, judge fit fairly and build workplaces where people genuinely want to stay.
