AI and Digital Transformation: Are They the Same?

Open almost any tech headline and you will see “AI‑powered digital transformation”, “AI‑first digital strategy”, or even “we’ve completed our AI transformation”. The terms are increasingly blurred, and in the rush to sound current, many organisations quietly rebadge long‑running cloud or automation programmes as “AI initiatives”. When everyone claims to be “doing AI”, it becomes hard to tell what is genuinely new from what is simply a fresh label on familiar digital projects.

This confusion hides an important strategic question: is AI just the latest wave of digital, or something fundamentally different? The position in this article is clear: AI is not the same as digital transformation. It is a powerful accelerator within it – but without solid digital foundations, AI merely adds cost, risk, and dependency.

Treating generic AI tools as a transformation in their own right leads to convergence: every firm effectively buys the same brain, and competitive edges disappear. Commentators echo this concern, arguing that standardised AI services erode competitive advantage when everyone uses near‑identical models and workflows.

For leaders and teams, the distinction is practical, not semantic. Blurred thinking tends to produce:

- Misplaced investment in eye‑catching pilots that never scale

- Over‑reliance on vendors and loss of internal capability

- Fragmented experiments that fail to change how work is actually done

The rest of the article will unpack this. We will define AI and digital transformation in plain terms, separate where they differ, explore where they truly intersect, outline both benefits and risks, and offer concrete guidance for adopting AI responsibly as part of a broader digital journey - not as a shortcut that skips the hard work.

What Do We Mean by “Digital” and by “AI”?

Before we can ask whether AI and digital transformation are the same, we need to be precise about the terms. Many organisations blur them together, which is why strategies stall, investments disappoint, and risk quietly accumulates.

Digital transformation and digitalisation

Digitalisation is the narrowest idea: it is about converting analogue processes and services into digital ones. Swapping paper forms for online forms, call‑centre queues for web chat, or wet signatures for e‑signatures all count as digitalisation. The work mostly focuses on efficiency and convenience.

Digital transformation goes further. It is the deliberate rewiring of how an organisation creates value, makes decisions, and works day to day, using digital technology as the organising principle rather than as a bolt‑on. Industry commentators increasingly describe this as moving from “IT projects” to building an enduring, digital‑first operating model that can flex as markets, regulation, and customer expectations change.

That typically includes:

- Modernising core systems (cloud platforms, SaaS, ERP, CRM) so data can flow and scale.

- Building data platforms and analytics so decisions use real‑time evidence, not intuition alone.

- Designing coherent digital experiences across web, mobile, contact centres, and branches.

- Automating processes with workflow tools and robotic automation, not just patching manual gaps.

- Investing in governance, skills, and culture so people can adopt new tools safely and confidently.

Analysts of AI‑enabled transformation warn that under‑investing in those “hidden” elements – data readiness, process redesign, culture, and governance – is a common reason digital programmes fail to deliver on their promise. As one practitioner puts it, “the real cost lies less in software and cloud, and more in the organisational capability you build around them.”

In short, digitalisation changes the tools; digital transformation changes the organisation.

What is artificial intelligence in this context?

Artificial intelligence, in this article, means systems that can carry out tasks we would usually expect humans to do: seeing, listening, reading, reasoning, predicting, and planning.

Useful categories for business readers are:

- Reactive and predictive systems: scoring credit or fraud risk, recommending products, or forecasting demand based on patterns in data.

- Machine learning and deep learning: models that learn from examples, from image recognition in insurance claims to anomaly detection in trading.

- Generative AI: models that can create text, code, images, audio, or video on demand, supporting content creation and complex analysis.

- Agentic AI: emerging “digital workers” that chain steps together, move across tools, and pursue a goal end‑to‑end, such as resolving a customer complaint or preparing a regulatory report. In financial services, for example, banks are already piloting agentic systems as “digital sidekicks” that manage routine operational work while humans focus on higher‑judgement activities.

The common thread is that AI systems adapt with data, rather than following only fixed, hand‑coded rules. As regulators such as the UK Financial Conduct Authority have emphasised, this makes AI both a powerful enabler of new, data‑driven business models and a technology that demands stronger oversight of data quality, bias, and model risk.

How AI fits within digital transformation

AI sits inside digital transformation; it does not replace it. Effective AI depends on the very foundations that digital programmes are meant to build:

- Clean, governed data and shared data platforms.

- Modern, connected applications and networks.

- Secure, observable infrastructure that can operate at machine speed.

Without those basics, AI tends to remain a shiny point solution, hard to scale and harder to govern. With them, AI becomes a powerful accelerator: automating complex work, amplifying human judgement, and enabling new products and services that competitors cannot easily copy.

Commentators on the “next great transformation” argue that AI will be most transformative when it builds on robust digital infrastructure and augments, rather than attempts to replace, human decision‑making.

So, at a high level:

- Digital transformation is the broad rewiring of how an organisation operates in a digital world.

- AI is a set of capabilities that can deepen and speed up that rewiring, but cannot stand in for it.

Why the confusion persists

The line between AI and digital transformation often gets muddied.

Marketing narratives frequently badge any software upgrade as “AI‑powered”, encouraging the belief that buying a tool equals transformation. Vendors sell generic models as if they were strategy in a box, masking the need for data, process, and workforce investment. Under pressure for quick wins, leaders rebrand website refreshes, cloud migrations, or chatbot pilots as “AI programmes” to signal modernity.

The result is predictable:

- Over‑promising on what AI alone can deliver.

- Under‑investing in data quality, governance, and infrastructure.

- Neglecting skills, culture, accessibility, and inclusion, which determine whether benefits reach people fairly or simply widen existing digital inequalities.

Separately, there is a growing risk that organisations “buy the same brain” as their competitors by leaning too heavily on identical off‑the‑shelf models, eroding genuine differentiation and deepening dependence on a small number of AI vendors.

Distinguishing “digital” from “AI” at the outset helps avoid those traps and sets a more realistic path for value and resilience.

How AI Changes – But Does Not Replace – Digital Transformation

From digitising workflows to augmenting workforces

Early digital transformation focused on tidying up the back office: standardising processes, automating repeatable steps, and offering basic self‑service. The aim was efficiency - fewer hand‑offs, fewer errors, lower cost.

Agentic AI shifts the centre of gravity from workflows to workforces. Instead of scripts that automate a single step, organisations can now deploy “digital workers” that plan, reason and act across systems. As Finextra notes, agentic AI behaves less like a macro and more like a “digital workforce” that can understand context, decompose goals and operate across tools end‑to‑end. A single agent might:

- Triage customer e‑mails, extract intent and priority

- Pull data from multiple systems, update relevant records

- Draft replies, ask for human sign‑off where required

- Raise exceptions or escalate complex cases

This creates a hybrid labour model. People concentrate on judgement, relationships and edge cases; agents handle multi‑step execution at machine speed. Cisco’s Anurag Dhingra describes this as AI doing “human‑like work at machine speeds” while humans retain the unique qualities that define us.

For transformation, this means the design problem changes. It is no longer enough to redraw process maps; leaders must rethink:

- Role definitions and career paths in human–AI teams

- New performance measures (e.g. quality of supervision, not just volume processed)

- Clear escalation paths and liability when agents act autonomously

Digital transformation thus expands from “how work flows” to “how humans and agents work together”, with the network, data and operating model all tuned for this kind of augmented workforce.
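
To make the agent steps and escalation paths described above more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a reference design: the `Case` fields, the keyword list and the status names are all hypothetical, the keyword match stands in for a real intent model, and the step that pulls data from other systems is omitted.

```python
import re
from dataclasses import dataclass, field

# Illustrative keyword list (an assumption); a real deployment would use a trained model.
HIGH_RISK_TERMS = {"fraud", "complaint", "legal"}


@dataclass
class Case:
    email: str
    intent: str = "unknown"
    priority: str = "normal"
    draft: str = ""
    status: str = "new"
    audit_log: list = field(default_factory=list)


def triage(case: Case) -> None:
    """Extract a crude intent and priority from the e-mail text."""
    words = set(re.findall(r"[a-z]+", case.email.lower()))
    matched = sorted(words & HIGH_RISK_TERMS)
    if matched:
        case.intent = matched[0]
        case.priority = "high"
    else:
        case.intent = "general_enquiry"
    case.audit_log.append(f"triage: intent={case.intent}, priority={case.priority}")


def draft_reply(case: Case) -> None:
    """Prepare a draft reply; the agent never sends it directly."""
    topic = case.intent.replace("_", " ")
    case.draft = f"Thank you for contacting us about your {topic}."
    case.audit_log.append("draft: reply prepared")


def route(case: Case) -> None:
    """Escalate high-priority cases; queue the rest for human sign-off."""
    case.status = "escalated" if case.priority == "high" else "awaiting_sign_off"
    case.audit_log.append(f"route: status={case.status}")


def handle(email: str) -> Case:
    """Run the agent steps in order and return the case with its audit trail."""
    case = Case(email=email)
    for step in (triage, draft_reply, route):
        step(case)
    return case


routine = handle("Hello, could you confirm your opening hours?")
risky = handle("I want to report fraud on my account.")
print(routine.status, "|", risky.status)
```

In this sketch every path ends with a person: routine cases wait for sign-off and high-priority cases are escalated. The audit log records each step, which is one simple way to support the supervision and liability questions raised above.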

Commoditisation versus differentiation

As advanced models become widely available, many firms effectively “buy the same brain”. Basic capabilities - summarisation, code generation, generic chatbots - rapidly become hygiene factors rather than sources of edge. As one think‑tank leader put it in Business Insider, “If you and your competitor are all using the same service, you have no edge over each other.”

Advantage now comes from what you wrap around these commodities:

- Proprietary data – tuning and grounding models in your own operational, customer and risk data.

- Process integration – embedding AI into core systems and end‑to‑end workflows, not just adding a chatbot layer.

- Governance and trust – guardrails, auditability and alignment with risk appetite, which are especially valuable in regulated sectors.

In financial services, for example, the FCA’s AI strategy emphasises data‑led supervision, synthetic data and robust model governance as differentiators, not just the use of generic models. Similarly, banking leaders argue that trust and governance are now as important as the technology itself when turning AI into a strategic differentiator.

These differentiators depend on strong digital foundations. Without clean data, well‑owned processes and modular platforms, it is difficult to turn generic AI into something distinctive and defensible.

Data and infrastructure as non‑negotiable foundations

AI exposes the strengths and weaknesses of previous digital programmes. Where data quality, lineage and accessibility were treated as secondary, AI performance now stalls or behaves unpredictably.

Foundations that increasingly become non‑negotiable include:

- Robust data governance, catalogues and access controls

- Scalable, secure cloud platforms and data pipelines

- Low‑latency networks able to support real‑time, agentic workloads

- Deep observability and monitoring, extending into “AgenticOps” for tracking how agents behave in production

Industry research shows most organisations are already feeling this pressure: Cisco‑commissioned work reports that over 90% of enterprises are increasing network investment to cope with AI workloads, and a large majority say current data centres are at or beyond capacity.

In regulated sectors, supervisors are likewise building AI‑ready infrastructure - processing billions of transactions and web records a month - to move from reactive to proactive oversight.

In practice, AI is turning digital plumbing and data discipline from “nice to have” into core strategic infrastructure.

Economics, ROI, and the cost curve

AI spending is often judged by visible costs: licences, model access, cloud utilisation. Yet the decisive costs - and ultimately the drivers of return - sit elsewhere:

- Redesigning processes to exploit agentic capabilities

- Change management, training, and new skills for supervising agents

- Data remediation and strengthened governance

Analysts tracking AI‑driven transformation consistently highlight these “hidden” investments in data, operating model and culture as the main determinants of value creation. Treating AI purely as a technology line item, rather than a long‑term organisational capability, is one of the main reasons many pilots fail to scale.

This means AI is an accelerator, not a shortcut. In the short term, costs may rise as organisations modernise platforms and upskill people. Over time, the pay‑off shows up as:

- Fewer errors and rework

- Faster, more consistent decisions

- Better customer journeys

- More resilient, predictable operations

The key takeaway: AI can dramatically lift the returns on digital transformation, but only where the digital groundwork - data, infrastructure, processes and people - has already been laid and is actively being improved.

Getting Practical: From AI Curiosity to Responsible Digital Change

Start with outcomes, not tools

The risk with AI is treating it as a shortcut to “modernisation” rather than as one ingredient in a broader digital shift. Begin with the transformation agenda, not the model catalogue. As one financial services leader puts it, “the organisations that get the most out of AI will not be those who implement it the quickest; it will be those who use it effectively with thoughtful controls and parameters in place.” That starts with clarity on why you are using it at all.

Clarify, in plain terms, what you are trying to change:

- Which outcomes matter most: reduced operational risk, quicker onboarding, fewer errors, better accessibility, more resilient services?

- How do these map onto your existing digital roadmap, data strategy, and risk appetite?

Once the outcomes are clear, test where AI genuinely adds differentiated value rather than recreating what standard automation can already do. Commentators have warned that simply “buying the same brain” as everyone else risks eroding competitive edge if you do not connect AI tightly to proprietary processes and data, rather than generic use cases shared with rivals. AI is strongest where work is pattern‑rich and knowledge‑heavy:

- Classification, prediction, summarisation, content and code generation.

- Multi‑step tasks that can be safely automated or co‑piloted by agents, with humans handling exceptions, relationships, and oversight.

This prevents “AI everywhere” thinking and keeps investment focused on operational impact, not experiments that quietly stall. It also aligns with a growing consensus in digital transformation research that value emerges from end‑to‑end process change and capability building, not tool deployment alone.

Build an AI‑aware digital strategy

Treat AI as a design input to your digital estate, not a bolt‑on widget. Re‑examine customer journeys, services, and back‑office flows as human–AI systems: where should agents triage, draft, or monitor, and where must humans decide, explain, and own the outcome?

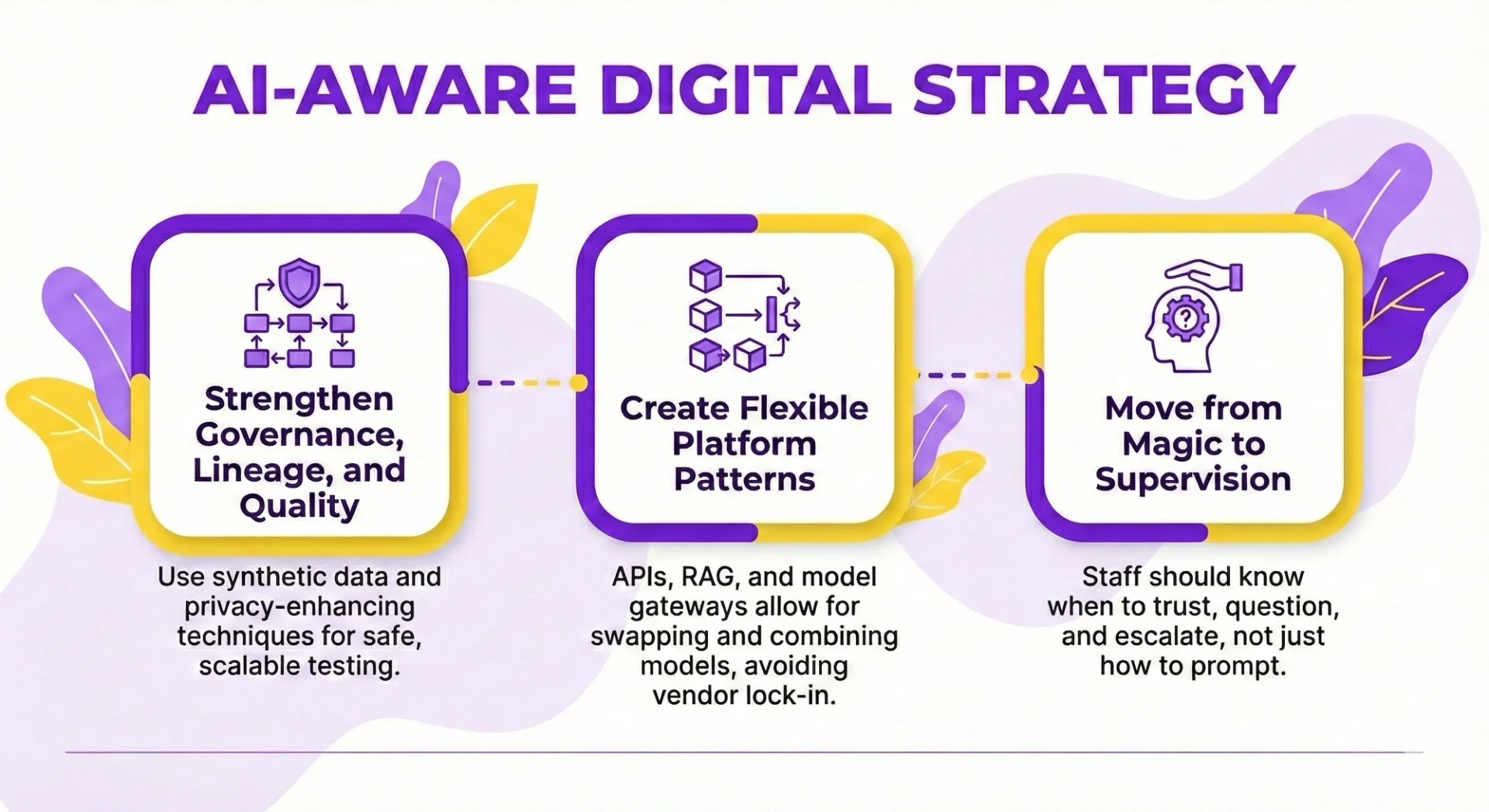

An AI‑aware digital strategy lines up several essential elements:

- Data: strengthen governance, lineage, and quality; use synthetic data and privacy‑enhancing techniques so you can test safely at scale. Regulators such as the UK Financial Conduct Authority now explicitly promote synthetic data and privacy‑enhancing technologies as enablers for safe innovation at scale, underlining that these are becoming baseline expectations rather than “nice‑to‑haves”.

- Architecture: create platform patterns - APIs, retrieval‑augmented generation, model gateways - that allow you to swap or combine models, avoiding over‑dependence on a single vendor. Industry analysts increasingly distinguish between generic foundation models and the differentiating value layer built from proprietary data and orchestration.

- Skills and culture: move from “AI as magic” to “AI as a tool I supervise”. Staff should know when to trust, question, and escalate, not just how to prompt. Banks, for example, are investing in AI academies and leadership programmes to build exactly this supervisory mindset alongside technical skills.

In summary, the strategy should ensure that:

- Generic models are treated as commodities.

- Advantage comes from how you fuse AI with proprietary data, processes, and trust controls.
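
The "model gateway" pattern mentioned under Architecture can be sketched in a few lines of Python. This is a simplified illustration, assuming each model is just a function from prompt text to response text. The class name `ModelGateway` and the two stand-in models are hypothetical and do not correspond to any vendor's SDK.

```python
from typing import Callable, Dict, List, Tuple

# A "model" here is any function from prompt text to response text.
ModelFn = Callable[[str], str]


class ModelGateway:
    """Routes requests to registered models and falls back when one fails."""

    def __init__(self) -> None:
        self._models: Dict[str, ModelFn] = {}
        self._order: List[str] = []

    def register(self, name: str, model: ModelFn) -> None:
        """Add a model; registration order is the fallback order."""
        self._models[name] = model
        self._order.append(name)

    def complete(self, prompt: str) -> Tuple[str, str]:
        """Try each model in turn and return (model name, response)."""
        errors = []
        for name in self._order:
            try:
                return name, self._models[name](prompt)
            except Exception as exc:  # broad on purpose: any provider failure
                errors.append(f"{name}: {exc}")
        raise RuntimeError("all models failed: " + "; ".join(errors))


# Hypothetical stand-ins for real provider calls.
def vendor_a(prompt: str) -> str:
    raise TimeoutError("vendor A unavailable")


def in_house(prompt: str) -> str:
    return f"[in-house] summary of: {prompt}"


gateway = ModelGateway()
gateway.register("vendor_a", vendor_a)
gateway.register("in_house", in_house)

used, answer = gateway.complete("Q3 complaints report")
print(used, "->", answer)
```

Because calling code depends only on `gateway.complete`, a provider can be swapped or added by changing the registrations rather than every application that uses a model.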

New roles, skills, and ways of working

AI‑enabled digital change reshapes both workflows and workforces. You will need roles that did not exist in traditional transformation programmes:

- AI product owners to align use cases with business outcomes and risk.

- Agent supervisors to monitor performance, handle exceptions, and adjust guardrails.

- Prompt and interaction designers to make human–AI collaboration usable and inclusive.

These roles sit inside cross‑functional teams, combining domain experts, engineers, data scientists, risk and compliance. Governance, escalation logic, and performance metrics must reflect a hybrid labour model in which digital agents execute routine steps while humans retain accountability. This mirrors wider moves towards “digital workforces” where agentic AI systems operate across tools and channels while humans focus on relationship‑heavy and judgement‑intensive work.

Inclusion, accessibility, and user trust

AI can be a powerful accessibility accelerator: automated captions and alt text, adaptive interfaces, translation, content simplification, and support for different input modes. But poorly designed systems risk entrenching new digital divides.

Commentators on disability and technology note that the same AI that “supercharges accessibility” can just as easily widen digital inequalities if accessibility is bolted on late or omitted from model training and interface design.

Build inclusion into your digital and AI design practices:

- Involve diverse users early; test AI outputs and interfaces for accessibility, fairness, and error patterns.

- Use open tools and benchmarks to check AI‑generated content and code for accessibility gaps, and feed issues back into model and UX design. Initiatives such as open‑source accessibility checkers for AI‑generated code demonstrate that systematic benchmarking is both possible and increasingly expected.

Trust then becomes a strategic asset rather than a compliance chore:

- Explain clearly how AI is used, what data it relies on, and how it is governed.

- For decisions that affect rights or wellbeing, provide simple escalation to a human, transparent reasoning where possible, and easy opt‑outs.

Done well, this combination - outcomes‑first thinking, AI‑aware architecture, new skills, and inclusive design - turns AI from a destabilising add‑on into a disciplined extension of your digital transformation.

Governance, Risk, and Resilience in an AI‑Rich Digital World

As AI shifts from experiment to core infrastructure, governance can no longer be a compliance afterthought. The question for digital leaders is not simply “is it regulated?” but “does it reliably deliver the outcomes we claim, for the people we serve?”

Outcomes‑based governance, not box‑ticking

Regulators are modernising their own toolkits with data lakes, synthetic data and sandboxes, and expect firms to match that sophistication. The FCA, for example, is explicit about moving towards *data‑led, digitally enabled regulation* with permanent digital sandboxes and synthetic data environments to “spot and stop harm faster” in live markets, not just in pilots. Governance is moving from paperwork to provable outcomes: fairness, robustness, explainability and consumer protection in real use, not just in the lab.

Within digital programmes, effective AI governance means treating models as living assets:

- Maintaining an inventory of models and their criticality.

- Classifying risks by use‑case, not just by technology.

- Testing routinely for bias, robustness and security vulnerabilities.

- Monitoring for drift, performance decay and unintended behaviours.
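
As a rough illustration of the list above, the Python sketch below keeps a small model inventory and flags models whose recent accuracy has decayed beyond a tolerance tied to their criticality. The record fields, tolerance values and accuracy figures are assumptions made for the example, not recommended thresholds or real measurements. In practice, drift monitoring would cover far more than a single accuracy metric.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ModelRecord:
    name: str
    use_case: str
    criticality: str          # "low", "medium" or "high"
    baseline_accuracy: float  # measured when the model was approved


# Hypothetical tolerances: tighter for more critical use-cases.
MAX_DROP = {"low": 0.10, "medium": 0.05, "high": 0.02}


def needs_review(record: ModelRecord, recent_accuracy: list) -> bool:
    """Flag a model whose recent average accuracy fell beyond its tolerance."""
    drop = record.baseline_accuracy - mean(recent_accuracy)
    return drop > MAX_DROP[record.criticality]


inventory = [
    ModelRecord("credit_scoring_v3", "lending decisions", "high", 0.91),
    ModelRecord("faq_chatbot_v1", "customer self-service", "low", 0.80),
]

# Illustrative monitoring figures, not real measurements.
recent = {
    "credit_scoring_v3": [0.88, 0.87, 0.86],
    "faq_chatbot_v1": [0.78, 0.77, 0.79],
}

flagged = [m.name for m in inventory if needs_review(m, recent[m.name])]
print(flagged)
```

Here the lending model is flagged after a four-point drop, while the chatbot's two-point drop stays within its looser tolerance. That reflects the idea of classifying risk by use-case rather than by technology.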

Clear accountability is essential when humans and AI share decisions. Firms need explicit answers to who designs, who approves, who supervises and who is liable when things go wrong. In financial services, senior leaders are already being challenged to align AI use with conduct rules and emerging model risk expectations, reinforcing that “AI sits at the core of innovation and underpins many new and transformed business models”, rather than being a side project. In a hybrid workforce of people and agents, escalation paths and override rights become central design choices, not footnotes.

Managing risk and dependency

AI concentrates risk in new ways. Relying on a single provider or foundation model can leave organisations exposed on resilience, pricing and strategic independence, especially as cognitive work is offloaded to tools. As one think‑tank leader put it, if every firm “buys the same brain”, competitive edges erode and dependency deepens.

Resilient digital strategies therefore prioritise:

- Data and model portability, with contracts and architectures that make switching feasible.

- Abstraction layers and multi‑model set‑ups, so components can be swapped without rewiring the whole estate.

- Retaining deep domain expertise in‑house, rather than hollowing out knowledge as subscriptions rise.

- Human‑in‑the‑loop checkpoints for high‑impact or sensitive decisions, ensuring that judgement and accountability remain human even where execution is automated.

This is not anti‑vendor; it is about optionality and continuity in an environment where both capabilities and rules are evolving at speed. Banks exploring agentic AI “digital sidekicks” are already stressing that the real differentiator is not generic tooling but how it is governed, wired into proprietary data and supervised by skilled people.

In parallel, experienced practitioners in digital transformation caution that the true long‑term cost of AI lies in governance, skills and organisational resilience, not just licences and cloud spend - another reason to design out single points of failure before they are embedded.

Balancing innovation with safety and ethics

As AI amplifies digital reach, it also amplifies harm if poorly designed: bias and discrimination at scale, privacy intrusions, disinformation, and misuse of autonomous agents in financial crime, scams or targeted persuasion. Commentators on the “next great transformation” in AI governance point to familiar fault lines - algorithmic bias, data‑driven surveillance, dual‑use cyber tools - as evidence that ethics and safety must be treated as hard constraints, not optional extras.

Embedding ethics into digital transformation means building safeguards into the change process, not polishing them on top:

- Structured impact assessments for AI use‑cases, examining who is affected, what could go wrong and how harm would be detected.

- Co‑design with affected groups, including disabled users and communities at higher risk of digital exclusion, so edge cases are treated as design inputs rather than afterthoughts. Accessibility experts warn that if inclusion is deferred in the rush to deploy generative AI, inequalities can be locked into the very code that powers future digital services.

- Active alignment with emerging AI and digital regulations in each sector, connecting model lifecycle controls to tangible outcomes such as fair lending, safe diagnostics or trustworthy public services.

The organisations that treat trust, safety and inclusion as core design constraints - rather than innovation brakes - are the ones most likely to turn AI from a commoditised utility into a durable competitive advantage.

So - Are AI and Digital Transformation the Same?

No. Digital transformation is the wider, ongoing shift to becoming a digital‑first organisation; AI is a powerful, fast‑moving set of tools that can accelerate that shift, but it cannot replace it. As one senior regulator at the FCA put it, AI now “sits at the core of innovation and underpins many new and transformed business models” – but it is still only part of a broader digital agenda, not a substitute for it.

Treat access to generic AI models as table stakes. Meaningful advantage comes from how you combine AI with your own data, systems, governance, and people. Analysts are already warning that firms “buying the same brain” risk eroding their competitive edge when everyone uses identical off‑the‑shelf tools, rather than building distinctive capabilities on top of them. That demands sustained investment in:

- Solid foundations: architecture, data quality, skills, culture, and inclusive design, including accessibility and equity considerations baked into AI and digital experiences from the outset

- Robust governance: safety, fairness, resilience, and trust, not just speed and savings, backed by clear operating models for AI, model risk management, and ongoing oversight

Organisations that equate AI with digital transformation risk shallow change, vendor dependency, and copy‑and‑paste strategies – a pattern already visible where generic tools are adopted as a shortcut instead of tackling legacy systems, processes, and culture. Those that see AI as part of a broader digital journey can build distinctive, human‑centred capabilities that endure, even as the underlying technologies continue to evolve and as AI shifts from isolated tools to embedded “digital sidekicks” and agentic systems across the enterprise.
